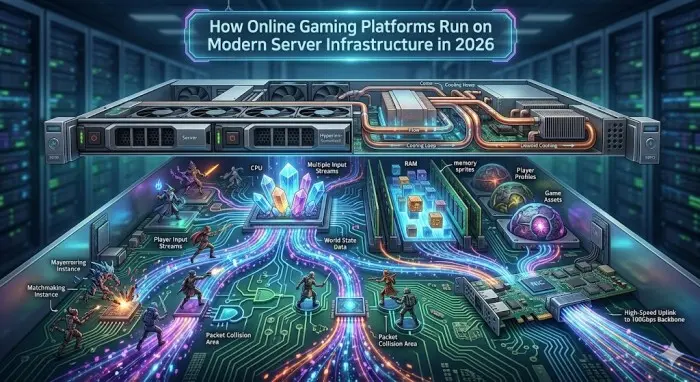

Online gaming is arguably the one domain that continually pushes the limits of server architecture, networking, and operating systems. The pressure on gaming infrastructure in 2026 is astronomical: gamers demand sub-20ms latency, large-scale simultaneous multiplayer experiences, and zero downtime, all while 4K assets stream in real time.

To the legions of systems administrators, DevOps engineers, and network architects, the magic isn’t in the game graphics; it’s in the server racks. Behind every perfectly executed headshot, real-time casino bet, and giant multiplayer raid lies a carefully coordinated symphony of Linux-driven microservices, BSD-based edge routers, and distributed databases spread across the globe.

Let’s take a closer look at the server infrastructure that powers online gaming in 2026.

The death of the monolith: microservices and edge computing

Only a decade ago, many gaming platforms ran on monolithic server clusters housed in a handful of centralized data centers. Today that model is obsolete. The speed of light is a hard physical limit, so a packet traveling between a player in Tokyo and a centralized server in Virginia will always incur unacceptable round-trip latency.
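A quick back-of-the-envelope calculation shows why no amount of server tuning can rescue a centralized design. The sketch below computes the great-circle distance between Tokyo and Ashburn, Virginia (a major data-center hub; coordinates are approximate) and the resulting minimum round-trip time, assuming light in optical fiber covers roughly 200 km per millisecond (about two-thirds of its vacuum speed). Real fiber routes are longer than the great circle and routers add queuing, so this is a floor, not an estimate.

```python
import math

EARTH_RADIUS_KM = 6371.0
# Assumption: light in glass fiber travels at roughly 2/3 c, about 200 km per ms.
FIBER_KM_PER_MS = 200.0


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points given in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time over fiber (there and back)."""
    return 2 * distance_km / FIBER_KM_PER_MS


tokyo = (35.6762, 139.6503)
ashburn = (39.0438, -77.4874)
distance = great_circle_km(*tokyo, *ashburn)
print(f"{distance:.0f} km, at least {min_rtt_ms(distance):.0f} ms round trip")
```

The floor comes out at roughly 100 ms, several times the sub-20ms budget gamers expect, before a single game tick has been processed. The only lever left is distance, which is exactly what edge computing pulls.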

Edge computing plays a central role in gaming infrastructure in 2026. Providers deploy a highly distributed grid of lightweight nodes placed as close to the ISP level as possible.

This is achieved through sophisticated containerization. Docker walked so that Kubernetes could run; the infrastructure of 2026 runs on ultra-lightweight WebAssembly (WASM)-based containers and microVMs (such as AWS Firecracker or Kata Containers) on custom, stripped-down Linux kernels. These let gaming companies spin up localized copies of a game server or lobby within milliseconds in response to local player demand, significantly reducing last-mile routing latency.
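To make the microVM side concrete, here is a minimal sketch of the request sequence an orchestrator might send to a Firecracker process to boot one lobby instance. Firecracker is configured through a REST API on a Unix socket: machine size, kernel, and root drive are set with `PUT` requests, then the VM is started with an `InstanceStart` action. The kernel and rootfs paths, vCPU count, and memory size are hypothetical, and the sketch only builds the requests rather than sending them.

```python
import json
from typing import Any, Dict, List, Tuple

Request = Tuple[str, str, Dict[str, Any]]  # (HTTP method, API path, JSON body)


def firecracker_boot_plan(kernel: str, rootfs: str,
                          vcpus: int = 2, mem_mib: int = 512) -> List[Request]:
    """Ordered API calls to configure and start one microVM (hypothetical sizing)."""
    return [
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        ("PUT", "/boot-source", {
            "kernel_image_path": kernel,
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        }),
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": rootfs,
            "is_root_device": True,
            "is_read_only": False,
        }),
        # Nothing runs until this action is issued.
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]


def to_http(method: str, path: str, body: Dict[str, Any]) -> bytes:
    """Serialize one call as a raw HTTP/1.1 request for the API Unix socket."""
    payload = json.dumps(body).encode()
    head = (f"{method} {path} HTTP/1.1\r\n"
            "Host: localhost\r\n"
            "Content-Type: application/json\r\n"
            f"Content-Length: {len(payload)}\r\n\r\n")
    return head.encode() + payload


plan = firecracker_boot_plan("/srv/vmlinux", "/srv/lobby-rootfs.ext4")
for method, path, body in plan:
    print(method, path, json.dumps(body))
```

In practice these payloads would be written to the socket passed via `--api-sock` (the Firecracker getting-started guide does the same with `curl --unix-socket`). Pre-built kernels and root filesystems per region are what make millisecond-scale startup plausible.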

Networking in 2026: eBPF and the dominance of QUIC

Context switching between user space and kernel space can become a serious bottleneck when a server handles millions of concurrent UDP and TCP connections through the traditional Linux kernel network stack.

To address this, modern gaming platforms have widely adopted eBPF (extended Berkeley Packet Filter). eBPF has transformed packet filtering, routing, and observability because it lets sandboxed programs run directly inside the Linux kernel without modifying kernel source code or loading kernel modules. By processing and routing player telemetry at the lowest possible layer of the stack, game servers eliminate much of that overhead and maximize throughput.
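Real eBPF programs are written in restricted C, compiled to eBPF bytecode, and attached to a kernel hook such as XDP (eXpress Data Path) at the network driver. The Python below is not an eBPF program; it is a user-space model of the per-packet decision such an XDP filter makes, so the logic can be read and tested. The game port `7777` and the blocked address (from the 203.0.113.0/24 documentation range) are hypothetical; in a real deployment they would live in eBPF maps updated by a control plane.

```python
import ipaddress
import struct

# Verdicts use the same numeric values as enum xdp_action in <linux/bpf.h>.
XDP_DROP = 1
XDP_PASS = 2

ETH_HLEN = 14        # Ethernet II header length in bytes
ETH_P_IP = 0x0800    # EtherType for IPv4
IPPROTO_UDP = 17

# Hypothetical stand-ins for eBPF maps.
GAME_PORT = 7777
BLOCKED_SOURCES = {ipaddress.IPv4Address("203.0.113.66")}


def xdp_verdict(frame: bytes) -> int:
    """Decide DROP or PASS for one raw Ethernet frame, XDP-style."""
    # Every read is preceded by a length check, mirroring the bounds checks
    # the eBPF verifier requires before packet memory may be accessed.
    if len(frame) < ETH_HLEN + 20:
        return XDP_PASS  # too short for IPv4; let the normal stack decide
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    if ethertype != ETH_P_IP:
        return XDP_PASS  # this sketch only inspects IPv4
    ip = ETH_HLEN
    if frame[ip + 9] != IPPROTO_UDP:
        return XDP_PASS
    ihl = (frame[ip] & 0x0F) * 4  # IPv4 header length in bytes
    if ihl < 20 or len(frame) < ip + ihl + 8:
        return XDP_DROP  # malformed header or truncated UDP packet
    src = ipaddress.IPv4Address(frame[ip + 12:ip + 16])
    (dport,) = struct.unpack_from("!H", frame, ip + ihl + 2)
    if dport == GAME_PORT and src in BLOCKED_SOURCES:
        return XDP_DROP
    return XDP_PASS


def make_udp_frame(src: str, dport: int, proto: int = IPPROTO_UDP) -> bytes:
    """Build a minimal Ethernet/IPv4/UDP frame for testing (checksums left at zero)."""
    eth = bytes(12) + struct.pack("!H", ETH_P_IP)
    iph = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 28, 0, 0, 64, proto, 0,
                      ipaddress.IPv4Address(src).packed,
                      ipaddress.IPv4Address("198.51.100.1").packed)
    udp = struct.pack("!HHHH", 40000, dport, 8, 0)
    return eth + iph + udp
```

Because the verdict is reached before the kernel allocates a socket buffer, dropped packets never cost a context switch, which is where the overhead savings come from.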

The transport layer has evolved as well. For many client connections, TCP has largely been superseded by QUIC, a UDP-based protocol that provides secure, reliable connections. QUIC eliminates the transport-level head-of-line blocking that afflicts TCP and builds TLS 1.3 directly into its handshake. Because QUIC identifies a connection by connection IDs rather than by IP address and port, gaming clients keep their secure, persistent sessions even when a mobile player switches networks, for example onto a 5G/6G cellular network, avoiding dropped connections entirely.
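The mechanism that lets a QUIC session survive a network switch can be sketched as a server-side lookup table keyed by connection ID instead of by the client's address and port. This is a deliberately simplified model: a real QUIC stack validates a new path with PATH_CHALLENGE/PATH_RESPONSE frames before trusting it and lets clients rotate connection IDs (see RFC 9000), both of which are omitted here. All class and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Addr = Tuple[str, int]  # (IP address, UDP port)


@dataclass
class Session:
    player: str
    peer: Addr  # where replies are currently sent


class ConnectionTable:
    """Looks up sessions by QUIC connection ID rather than by UDP address/port."""

    def __init__(self) -> None:
        self._by_cid: Dict[bytes, Session] = {}

    def accept(self, cid: bytes, player: str, peer: Addr) -> None:
        self._by_cid[cid] = Session(player, peer)

    def route(self, cid: bytes, peer: Addr) -> Optional[Session]:
        session = self._by_cid.get(cid)
        if session is None:
            return None  # unknown connection ID
        if session.peer != peer:
            # Same connection ID arriving from a new address: the client
            # migrated (e.g. Wi-Fi to cellular). Path validation is skipped here.
            session.peer = peer
        return session


table = ConnectionTable()
table.accept(b"\x1a\x2b", "alice", ("192.0.2.10", 50000))
moved = table.route(b"\x1a\x2b", ("198.51.100.20", 61000))  # new network, same session
```

A TCP server, by contrast, effectively keys state on the address/port 4-tuple, so the same address change would look like an unrelated stranger and the session would have to be re-established.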

Transactional integrity and high-availability states

Whereas massively multiplayer online (MMO) games focus on delivering player coordinates as quickly as possible (a lost packet can simply be superseded by the next one, which carries the current state), other areas of gaming face a very different infrastructure demand: absolute transactional integrity.
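The "next packet carries the correct state" approach usually works by stamping each state snapshot with a sequence number and keeping only the newest one, discarding late or duplicate arrivals. The sketch below assumes 16-bit sequence numbers that wrap around, compared with serial-number arithmetic in the style of RFC 1982; the class and field names are hypothetical.

```python
from typing import Any, Optional

SEQ_BITS = 16
SEQ_MOD = 1 << SEQ_BITS
HALF = SEQ_MOD // 2


def seq_newer(a: int, b: int) -> bool:
    """True if sequence number a is newer than b, tolerating wraparound."""
    return a != b and (a - b) % SEQ_MOD < HALF


class SnapshotReceiver:
    """Keeps only the most recent world-state snapshot; never waits for retransmits."""

    def __init__(self) -> None:
        self.latest_seq: Optional[int] = None
        self.state: Any = None

    def on_snapshot(self, seq: int, state: Any) -> bool:
        if self.latest_seq is None or seq_newer(seq, self.latest_seq):
            self.latest_seq, self.state = seq, state
            return True
        return False  # stale or duplicate: a newer state already superseded it


rx = SnapshotReceiver()
rx.on_snapshot(65534, {"x": 1})
rx.on_snapshot(1, {"x": 3})      # 65535 was lost and the counter wrapped; accept anyway
rx.on_snapshot(65535, {"x": 2})  # the lost packet shows up late and is ignored
```

This is precisely the behavior a wallet or bet ledger cannot tolerate: there, every update matters, not just the latest one.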

In the iGaming industry, where real-money betting and live-dealer video streaming take place, the server infrastructure cannot tolerate a single corrupted state, unexpected database rollback, or desync. The architecture required here has more in common with high-frequency financial trading than with traditional video gaming.
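Atomicity is the property that rules out a "half-placed" bet, where the wallet is debited but the wager is never recorded. The sketch below uses Python's built-in `sqlite3` on a single node purely to show the shape of such a transaction; distributed SQL databases offer the same all-or-nothing guarantee across nodes and may additionally ask clients to retry conflicting transactions. Table names, column names, and the integer minor-unit amounts are hypothetical.

```python
import sqlite3


def open_ledger() -> sqlite3.Connection:
    """In-memory ledger with one funded account (amounts in integer minor units)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, balance INTEGER NOT NULL)")
    conn.execute("CREATE TABLE bets (id TEXT PRIMARY KEY, player TEXT NOT NULL, amount INTEGER NOT NULL)")
    conn.execute("INSERT INTO accounts VALUES ('alice', 1000)")
    conn.commit()
    return conn


def place_bet(conn: sqlite3.Connection, bet_id: str, player: str, amount: int) -> bool:
    """Debit the wallet and record the bet as one atomic unit."""
    try:
        with conn:  # commits if the block succeeds, rolls back on any exception
            cur = conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE id = ? AND balance >= ?",
                (amount, player, amount),
            )
            if cur.rowcount != 1:
                raise ValueError("insufficient funds or unknown player")
            # A duplicate bet_id raises IntegrityError here, undoing the debit above.
            conn.execute("INSERT INTO bets VALUES (?, ?, ?)", (bet_id, player, amount))
        return True
    except (ValueError, sqlite3.IntegrityError):
        return False


def balance(conn: sqlite3.Connection, player: str) -> int:
    return conn.execute("SELECT balance FROM accounts WHERE id = ?", (player,)).fetchone()[0]
```

Replaying the same bet ID is rejected as a whole, so the wallet is never debited twice; either both rows change or neither does.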

Systems that support high-stakes, real-time casino gaming, for example, must remain ACID (Atomicity, Consistency, Isolation, Durability) compliant across databases located around the world while also broadcasting low-latency video to thousands of simultaneous viewers.

These platforms use distributed NewSQL databases such as CockroachDB or TiDB, running on highly optimized Linux clusters. Both rely on the Raft consensus algorithm, under which a write is committed only after a majority of replicas acknowledge it. As a result, if a single availability zone fails mid-transaction, committed financial state and game outcomes remain intact and immediately available on the surviving replicas.
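The availability-zone guarantee comes down to majority arithmetic. The sketch below shows quorum size, how many voters a Raft group can lose, and a check for whether a given replica placement survives the loss of any single zone. It simplifies Raft's commit rule (which also requires the entry to belong to the leader's current term), and the zone names are hypothetical.

```python
from typing import Mapping


def quorum_size(replicas: int) -> int:
    """Smallest majority of a Raft group with `replicas` voting members."""
    return replicas // 2 + 1


def tolerated_failures(replicas: int) -> int:
    """How many voters can fail while a majority remains."""
    return (replicas - 1) // 2


def is_committed(acks: int, replicas: int) -> bool:
    """An entry commits once a majority (leader included) has persisted it."""
    return acks >= quorum_size(replicas)


def survives_zone_loss(replicas_per_zone: Mapping[str, int]) -> bool:
    """True if losing any one zone still leaves a majority of voters."""
    total = sum(replicas_per_zone.values())
    return all(total - lost >= quorum_size(total) for lost in replicas_per_zone.values())


print(survives_zone_loss({"zone-a": 1, "zone-b": 1, "zone-c": 1}))  # one replica per zone
print(survives_zone_loss({"zone-a": 2, "zone-b": 1}))               # two replicas share a zone
```

Three replicas in three zones keep a two-vote majority after any one zone goes dark, while the same three replicas packed into two zones do not, which is why replica placement matters as much as replica count.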

Why Linux and BSD still reign supreme

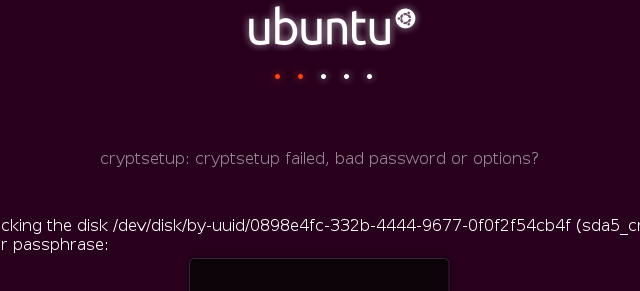

Although game development itself is largely proprietary, server-side infrastructure remains fiercely open source, with Ubuntu as the dominant Linux distribution.

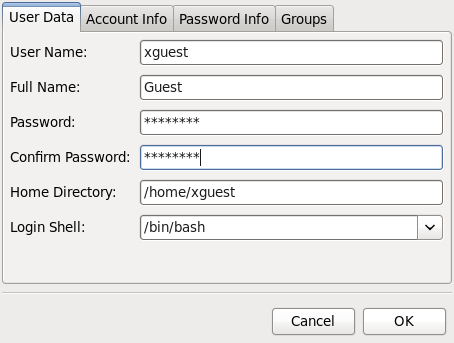

The Linux Ecosystem: Linux is undoubtedly the king of the gaming application layer. Custom distributions, frequently built with Yocto or based on minimal Alpine-like systems, are stripped of every unnecessary daemon. CPU affinity and Non-Uniform Memory Access (NUMA) pinning are carefully configured through systemd so that game-loop processes get dedicated cores and caches and uninterrupted CPU access, avoiding micro-stutters.
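The CPU-affinity half of that tuning can be illustrated with Python's Linux-only `os.sched_getaffinity`/`os.sched_setaffinity`. The sketch assumes a common convention of leaving low-numbered cores to the OS and pinning the game loop to the highest-numbered cores the process is allowed to use; the function name and that policy are hypothetical. In production the same effect is usually declared in the service unit with systemd's `CPUAffinity=` directive, and memory placement (which the Python standard library does not expose) with `NUMAPolicy=`/`NUMAMask=` on recent systemd versions.

```python
import os
from typing import Set


def pin_game_loop(pid: int = 0, reserved_cores: int = 1) -> Set[int]:
    """Pin `pid` (0 means the calling process) to the highest-numbered allowed CPUs."""
    allowed = sorted(os.sched_getaffinity(pid))
    if not 1 <= reserved_cores <= len(allowed):
        raise ValueError(f"reserved_cores must be between 1 and {len(allowed)}")
    # Assumed convention: low-numbered cores (often busier with interrupts and
    # housekeeping) stay with the OS; the game loop takes the top ones.
    target = set(allowed[-reserved_cores:])
    os.sched_setaffinity(pid, target)
    return target
```

Pinning keeps the scheduler from migrating the game loop between cores, so its working set stays warm in that core's caches.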

The BSD Advantage: Although Linux manages game logic and container orchestration, the network edge still relies heavily on the BSD operating systems, namely FreeBSD and OpenBSD. Many gaming companies continue to use FreeBSD’s legendary network stack and pf (Packet Filter) for edge routing, load balancing, and DDoS mitigation. FreeBSD’s stability under heavy network load, combined with OpenBSD’s rigorous security auditing, makes them ideal gatekeepers against the massive, AI-driven volumetric DDoS attacks expected to be prevalent in 2026.
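One building block of edge DDoS mitigation is per-source rate limiting with a block list, similar in spirit to pf's connection-rate limits that push offenders into an overload table. The Python below is not pf syntax; it is a token-bucket model of that idea, with a hypothetical refill rate and burst size. A real deployment would also expire block-list entries after a cooldown, which this sketch omits.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class SourceRateLimiter:
    rate: float   # tokens (packets) refilled per second, per source
    burst: float  # bucket capacity, i.e. the largest tolerated burst
    buckets: Dict[str, Tuple[float, float]] = field(default_factory=dict)  # src -> (tokens, last_ts)
    blocked: Set[str] = field(default_factory=set)  # analogous to an overload table

    def allow(self, src: str, now: float) -> bool:
        if src in self.blocked:
            return False
        tokens, last = self.buckets.get(src, (self.burst, now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens < 1.0:
            self.blocked.add(src)  # source exceeded its budget: block it
            self.buckets[src] = (tokens, now)
            return False
        self.buckets[src] = (tokens - 1.0, now)
        return True


limiter = SourceRateLimiter(rate=10.0, burst=5.0)
verdicts = [limiter.allow("192.0.2.1", now=0.0) for _ in range(6)]
```

Each source gets its own bucket, so a flood from one address exhausts only its own budget while legitimate players keep playing.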

AI-driven auto-scaling and zero-trust security

Today’s infrastructure relies on AI-based predictive auto-scaling. Rather than reacting to CPU utilization, which usually happens too late, machine learning models analyze historical load, social media sentiment, and global time zones to pre-warm servers and provision database replicas before a surge of players arrives.
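The core idea of predictive scaling, forecasting demand and provisioning ahead of it, can be shown without any machine learning. The sketch below swaps in Holt's linear exponential smoothing, a classical forecasting method, over a hypothetical series of concurrent-player counts, then converts the forecast into an instance count with headroom. The smoothing factors, headroom, and players-per-instance figure are all assumptions; production models would add seasonality and external signals such as those mentioned above.

```python
import math
from typing import Sequence


def holt_forecast(series: Sequence[float], alpha: float = 0.5,
                  beta: float = 0.5, horizon: int = 1) -> float:
    """Forecast `horizon` steps ahead using Holt's linear (level + trend) smoothing."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = float(series[0]), float(series[1] - series[0])
    for y in series[1:]:
        prev_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return level + horizon * trend


def instances_needed(forecast_players: float, players_per_instance: int,
                     headroom: float = 0.25) -> int:
    """Servers to pre-warm: forecast plus headroom, rounded up, never below one."""
    return max(1, math.ceil(forecast_players * (1 + headroom) / players_per_instance))


recent = [100, 200, 300, 400]  # hypothetical players online over the last four intervals
expected = holt_forecast(recent)
print(expected, instances_needed(expected, players_per_instance=100))
```

Because the trend term extrapolates growth, capacity is requested for the next interval's load rather than the current one, which is the whole point of scaling predictively instead of on CPU alarms.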

Security: Game servers run on a Zero-Trust Architecture. In older perimeter-based designs, an attacker who compromised a frontend web server could move laterally into the internal network. Microsegmentation in 2026 requires every container, microservice, and database to cryptographically authenticate with one another, typically through mutual TLS (mTLS) managed by service meshes such as Istio or Linkerd. As a result, even if a game instance is compromised, the blast radius is contained within that individual microVM.
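Service meshes apply mTLS transparently in their proxies, but the underlying requirement is simple: both sides present certificates and both sides verify them. The sketch below shows what that means with Python's standard `ssl` module. The certificate, key, and CA file paths are hypothetical parameters; with no CA loaded, the server rejects every client, which is the safe default.

```python
import ssl
from typing import Optional


def mtls_server_context(certfile: Optional[str] = None, keyfile: Optional[str] = None,
                        cafile: Optional[str] = None) -> ssl.SSLContext:
    """Server side: present our certificate AND demand one from every client."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    ctx.verify_mode = ssl.CERT_REQUIRED  # handshake fails without a valid client cert
    if certfile:
        ctx.load_cert_chain(certfile, keyfile)
    if cafile:
        ctx.load_verify_locations(cafile)  # CA that issued the client certificates
    return ctx


def mtls_client_context(certfile: Optional[str] = None, keyfile: Optional[str] = None,
                        cafile: Optional[str] = None) -> ssl.SSLContext:
    """Client side: verify the server (on by default) and present our own certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)  # CERT_REQUIRED + hostname check by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if cafile:
        ctx.load_verify_locations(cafile)
    if certfile:
        ctx.load_cert_chain(certfile, keyfile)
    return ctx
```

A compromised service without a certificate signed by the internal CA simply cannot complete a handshake with its neighbors, which is what confines lateral movement.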

The ultimate proving ground for server architecture

Gone are the days when racking a handful of monolithic servers and hoping the network would survive peak load was an option. Today’s gaming architecture is a work of art in extracting every last ounce of performance from the Linux kernel and BSD networking stacks. Whether it means shedding OS overhead with eBPF, pushing microVMs out to the ISP edge, or guaranteeing the integrity of database transactions in real-time wagering, the technical bar has never been higher. For a real-world preview of enterprise IT’s future, where latency is measured in single-digit milliseconds and downtime is simply unacceptable, one only needs to look at what the gaming industry is running in production today.