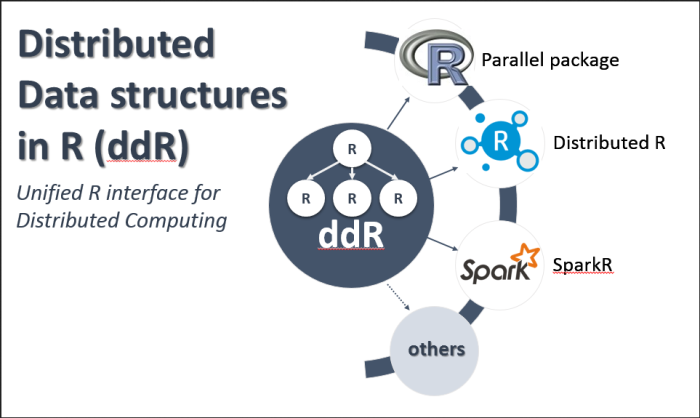

The main goal of the “ddR” package is to provide simple, generic data structures and functions that work across different backends, such as R’s “parallel” package, Spark, HP Distributed R, and others (Fig. 1).

For example, you should be able to prototype your application on your laptop using “ddR” with R’s “parallel” package, and then deploy the same application to a production environment running Spark, Distributed R, or another backend.

The first release of the “ddR” package is now available on CRAN! You can install it with install.packages("ddR") or download the code from the GitHub repo: https://github.com/vertica/ddR.

Currently, it supports the “parallel” package in R and HP Distributed R as backends, and we are working towards incorporating Spark. In addition, we have released two parallel algorithms on CRAN, kmeans.ddR and randomForest.ddR, which use the ddR API to express parallel and distributed versions of these algorithms.
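
To make the backend-switching idea concrete, here is a minimal sketch of what a ddR program can look like on a laptop. It assumes the package is installed and uses the “parallel” backend; the executor count, the toy function, and the variable names are illustrative choices, not taken from the announcement.

```r
# Minimal sketch, assuming ddR is already installed
# (for example via install.packages("ddR")).
library(ddR)

# Select the backend. Here we use R's "parallel" package;
# executors = 2 is an arbitrary value chosen for illustration.
useBackend(parallel, executors = 2)

# Apply a function to each element in parallel.
# The result is a distributed list, not a plain R list.
squares <- dmapply(function(x) x^2, 1:4)

# Gather the distributed result back into a local R list.
local_squares <- collect(squares)
print(unlist(local_squares))

# Assumption: on a cluster with HP Distributed R installed, only the
# backend selection should need to change, along the lines of:
#   library(distributedR.ddR)
#   useBackend(distributedR)
```

The point of the design is that the application code (the dmapply and collect calls) stays the same, and only the useBackend line decides where the work runs.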
